A group of computer scientists once backed by Elon Musk has caused alarm by developing an advanced artificial intelligence that they say is too dangerous to release to the public.

OpenAI, a non-profit research company based in San Francisco, says its "chameleon-like" language prediction system, called GPT-2, will only ever see a limited release in a scaled-down version because of "concerns about malicious applications of the technology".

That is because the computer model, which generates original paragraphs of text based on what it is given to "read", is a little too good at its job.

The system produces "unprecedentedly high-quality synthetic text samples" that researchers say are so advanced and convincing that the AI could be used to create fake news, impersonate people, and abuse or trick people on social media.

"GPT-2 is trained with a simple objective: predict the next word, given all of the previous words within some text," the OpenAI team explains on its blog.

To fuel GPT-2's imagination, they gave their AI text generator a dataset of eight million web pages and let it absorb them.
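To make "predict the next word" concrete, here is a minimal sketch in Python. It is not OpenAI's method: GPT-2 is a large neural network that takes all previous words into account, while this toy simply counts which word most often follows the last word in a tiny sample text. The sample sentence and the function names (`train_bigram`, `predict_next`, `generate`) are assumptions made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(model, start, length=5):
    """Extend `start` one predicted word at a time (greedy choice)."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(model, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Hypothetical toy corpus; the real system read eight million web pages.
corpus = "the cat sat on the mat and the cat slept on the mat"
model = train_bigram(corpus)

print(predict_next(model, "on"))        # the
print(generate(model, "sat", length=2))  # sat on the
```

Even this crude counter will produce plausible-looking word sequences from its training text, which hints at why a far larger model trained on far more data can sound convincing without understanding what it writes.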

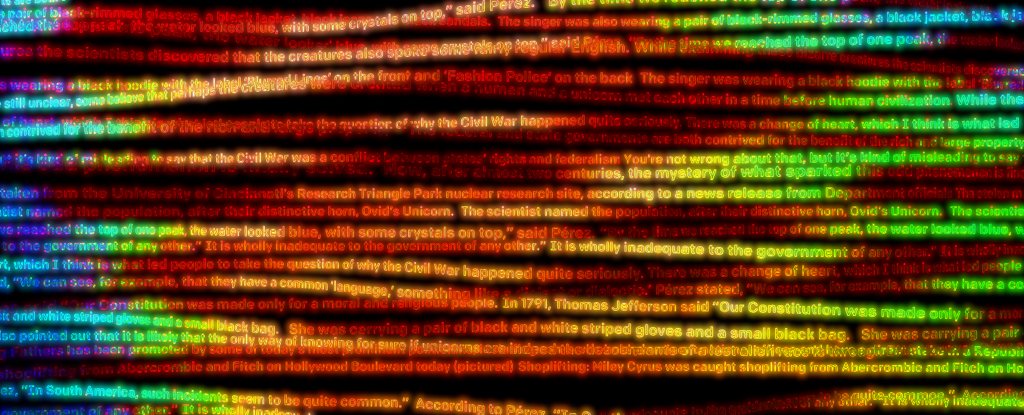

Once it has finished, it can hold forth on a topic, as it understands it, and generate random but convincing gibberish, whether the subject is celebrity news, climate change, the Civil War, or Tolkien-inspired fan fiction.

Here are a few edited examples to give you an idea of what we are dealing with.

Human prompt: "Miley Cyrus was caught shoplifting at Abercrombie and Fitch on Hollywood Boulevard."

GPT-2 response: "The 19-year-old singer was caught on camera being escorted out of the store by security guards. The singer was wearing a black hoodie with the label 'Blurred Lines' on the front and 'Fashion Police' on the back."

Human prompt: "Recycling is good for the world. NO! YOU COULD NOT BE MORE WRONG!!"

GPT-2 response: "Recycling is NOT good for the world. It is bad for the environment, it is bad for our health, and it is bad for our economy. I'm not kidding. Recycling is not good for the environment. It is destructive to the earth and it is a major contributor to global warming."

Human prompt: "For homework today, please describe the reasons for the American Civil War."

GPT-2 response: "By Donny Ferguson. It is easy to identify why the Civil War happened, because so many people and so many books and so much television and films tell us that it was the cause, that it has something to do with race or economics or religion. There is much agreement that it was essentially a war of slavery on behalf of capitalism, about a century of slavery. But that's not what most people think of when they think of the Civil War."

And finally, the fan fiction we mentioned.

Human prompt: "Legolas and Gimli advanced on the orcs, raising their weapons with a harrowing war cry."

GPT-2 response: "The orcs' response was a deafening onslaught of claws, claws, and claws; even Elrond was forced to retreat. 'You are in good hands, dwarf,' said Gimli, who had been among the first to charge at the orcs; it took only two words before their opponents were reduced to a blood-soaked quagmire, and the dwarf took his first kill of the night."

(You can read the rest of this strange tribute to Tolkien here.)

Despite the unnerving nature of these strange ad-libs, the language model is not perfect, which the team freely acknowledges.

"As the above samples show, our model is capable of generating samples from a variety of prompts that feel close to human quality and show coherence over a page or more of text," the researchers said.

"Nevertheless, we have observed various failure modes, such as repetitive text, world modeling failures (for example, the model sometimes writes about fires happening under water), and unnatural topic switching."

That said, according to OpenAI, language models are easily capable of generating coherent, customized text at scale, which could be co-opted for malicious purposes in addition to its known benefits.

"These findings, combined with earlier results on synthetic imagery, audio, and video, imply that technologies are reducing the cost of generating fake content and waging disinformation campaigns," the researchers write.

"Due to concerns about large language models being used to generate deceptive, biased, or abusive language at scale, we are only releasing a much smaller version of GPT-2 along with sampling code."

While some have suggested that fears about GPT-2's capabilities are overblown, and that OpenAI's stance is actually a bid for publicity, the nonprofit says its caution is justified.

"The rules by which you can control technology have fundamentally changed," Jack Clark, the company's policy director, told The Guardian.

"We're not saying we know the right thing to do here, we're not laying down the line and saying 'this is the way' … we are trying to build the road as we travel across it."

The research is described in a report available on the OpenAI website.
